Audio Synthesis in the Browser with Wax

Hello! I'm Michael Cella

he/they

aka nnirror

I build algorithmic art software and make art with it

MA in Media Arts

University of Michigan Department of Performing Arts Technology, 2025

What I make

Facet - live coding for audio, MIDI, OSC, images

Max for Live devices

Hardware / instrument hacking

Wax - web-based audio synthesis environment

Plan

- Wax: motivations, influences, concept

- Live-coded demonstrations

- Group performance

- Reflections

Wax.bz

Free, open source web application for audio synthesis

Runs in the browser - no installation

Influences

- Flow-based programming (Max, Pd, Reaktor)

- Modular synthesizers - audio building blocks

- Live coding tools accessible on the web (Strudel, Kabelsalat, Noisecraft)

- Modern web browsers - Web Audio API, WASM, AudioWorklets, RNBO from Cycling '74

Core Concept

- Add devices to a virtual workspace and connect them together

- All connections are digital audio signals, processed in blocks of 128 samples

- Save/share patches via URL or zip file
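To put the 128-sample block in perspective, here is a small TypeScript sketch that works out how long one block lasts and how many blocks are processed per second. The function names are illustrative, and the sample rates are assumed examples (the actual rate depends on the browser and audio hardware). The block size of 128 matches the render quantum that the Web Audio API uses.

```typescript
// Size of one processing block, in samples (assumed to match the
// Web Audio render quantum of 128 frames).
const BLOCK_SIZE = 128;

// Duration of one block in milliseconds at the given sample rate.
function blockDurationMs(sampleRate: number, blockSize: number = BLOCK_SIZE): number {
  return (blockSize / sampleRate) * 1000;
}

// Number of blocks processed per second at the given sample rate.
function blocksPerSecond(sampleRate: number, blockSize: number = BLOCK_SIZE): number {
  return sampleRate / blockSize;
}

console.log(blockDurationMs(48000).toFixed(2)); // "2.67"
console.log(blocksPerSecond(48000));            // 375
console.log(blockDurationMs(44100).toFixed(2)); // "2.90"
```

At 48 kHz, anything that updates once per block changes about every 2.67 ms, which is why block-level control feels immediate while signals between devices stay sample-accurate.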

Example Device

Why Build Wax?

Sharing! Previous systems I built were hard to share.

- Max system: Tied to my specific setup, high CPU baseline, paid license

- Facet: Requires command line and local installation, powerful in weird ways but not super efficient

Goal: Build something with a low floor and high ceiling: immediately accessible, CPU efficient, live-codable

Use Cases

- Educational contexts: immediate access to audio synthesis concepts

- Ad hoc, mobile scenarios where desktop computers or synthesizers aren't available

- Multi-channel audio setups or installations

- Jamming :)

Let's build some stuff

Demo 1: Basic Audio Synthesis

- Adding oscillators and filters

- Connecting devices with patch cords

- Parameter control through HTML inputs

- Analysis with scope and spectrogram

Demo 2: User Interface

- Top navbar

- User interface elements for patching

- Key combinations

Demo 3: MIDI Input & Control

- MIDI note input from keyboards and controllers

- MIDI CC for real-time parameter control

- Pitch bend and modulation
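The conversions behind this demo can be sketched with three small functions. This is a generic illustration of standard MIDI math, not Wax's actual code: the function names and the example parameter range are assumptions.

```typescript
// Convert a MIDI note number to a frequency in Hz
// (12-tone equal temperament, note 69 = A4 = 440 Hz).
function midiNoteToHz(note: number): number {
  return 440 * Math.pow(2, (note - 69) / 12);
}

// Scale a 7-bit MIDI CC value (0..127) linearly into [min, max].
function ccToRange(value: number, min: number, max: number): number {
  return min + (value / 127) * (max - min);
}

// Combine the two 7-bit data bytes of a pitch bend message into a
// 14-bit value (0..16383) and map it so that the centre (8192) is 0.
function pitchBendToBipolar(lsb: number, msb: number): number {
  const raw = (msb << 7) | lsb;
  return (raw - 8192) / 8192;
}

console.log(midiNoteToHz(69));           // 440
console.log(midiNoteToHz(81));           // 880
console.log(ccToRange(127, 20, 20000));  // 20000
console.log(pitchBendToBipolar(0, 64));  // 0
```

A linear CC mapping is the simplest choice; for frequency-like parameters an exponential curve usually feels more natural under a knob.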

Demo 4: Audio I/O & Multi-channel

- Live microphone input

- Loading audio buffers from a file or URL

- Multi-channel audio output [demo video]

Demo 5: Real-time collaboration

- Click Collab -> create room or join an existing one

- All devices in the same room are updated when any device makes a change

- Influences: intersymmetric.xyz, nudel.cc, PastaGang

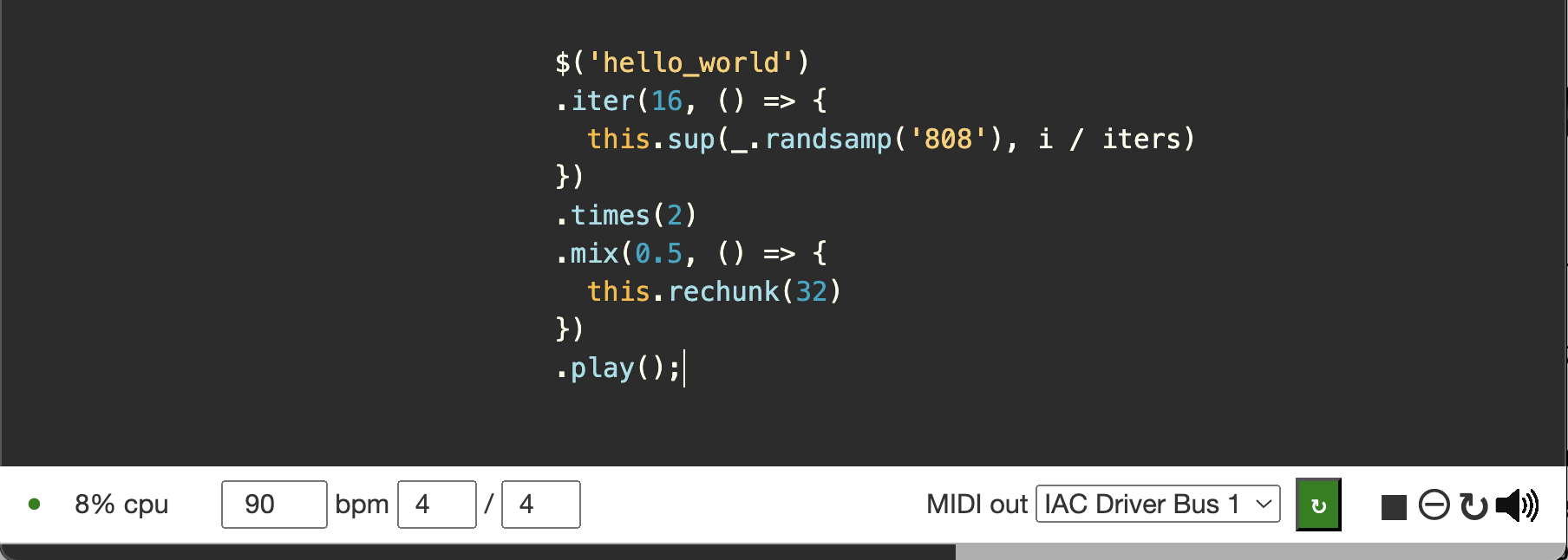
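The room model described above can be sketched as a simple relay: when one member makes a change, every other member in the room receives it. This is an in-memory illustration only. The `Room` class, the `PatchChange` shape, and the member names are hypothetical, and a real deployment would carry these messages over a network transport instead of direct function calls.

```typescript
// Hypothetical description of a single edit to a patch.
type PatchChange = { deviceId: string; param: string; value: number };
type Listener = (change: PatchChange) => void;

class Room {
  private members = new Map<string, Listener>();

  // Register a member and the callback that applies incoming changes.
  join(memberId: string, onChange: Listener): void {
    this.members.set(memberId, onChange);
  }

  leave(memberId: string): void {
    this.members.delete(memberId);
  }

  // Relay a change from one member to every other member in the room.
  broadcast(fromId: string, change: PatchChange): void {
    this.members.forEach((listener, id) => {
      if (id !== fromId) listener(change);
    });
  }
}

const room = new Room();
const received: string[] = [];
room.join("alice", () => { received.push("alice"); });
room.join("bob", () => { received.push("bob"); });
room.broadcast("alice", { deviceId: "osc1", param: "frequency", value: 220 });
console.log(received); // ["bob"]
```

The sender is skipped because it has already applied the change locally; only the other members need to catch up.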

Demo 6: Live Coding with Facet

- <pattern> device: live coding inside Wax

- From tiny control data structures to audio-rate signals

- Make huge modifications to the DSP without having to change any connections
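One way to picture the jump from a tiny data structure to an audio-rate signal is to stretch a short array of values across a block of samples. The sketch below uses linear interpolation. It is an assumption-laden illustration, not how Facet or the pattern device is implemented, and `stretchPattern` is a hypothetical name.

```typescript
// Stretch a short pattern of values to `length` samples using linear
// interpolation between neighbouring pattern values.
function stretchPattern(pattern: number[], length: number): Float32Array {
  const out = new Float32Array(length);
  if (pattern.length === 0 || length === 0) return out; // nothing to stretch
  if (pattern.length === 1 || length === 1) {
    out.fill(pattern[0]); // a single value becomes a constant signal
    return out;
  }
  const lastIndex = pattern.length - 1;
  for (let i = 0; i < length; i++) {
    // Position of output sample i within the pattern, 0..lastIndex.
    const pos = (i / (length - 1)) * lastIndex;
    const lo = Math.floor(pos);
    const hi = Math.min(lo + 1, lastIndex);
    const frac = pos - lo;
    out[i] = pattern[lo] * (1 - frac) + pattern[hi] * frac;
  }
  return out;
}

const block = stretchPattern([0, 1, 0], 5);
console.log(Array.from(block)); // [0, 0.5, 1, 0.5, 0]
```

Three numbers typed as text become a ramp up and down over the whole block, and editing those numbers reshapes the signal without touching any patch connections.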

Group Performance Time

Let's become a distributed electroacoustic ensemble

Instructions

- Take out your phones!

- iPhone: Turn silent mode OFF

- Set volume to maximum

- Scan the QR code

- (may take a minute to load if you're off Wi-Fi)

- Allow device motion access

The instructions on this screen will coordinate our actions and help us produce different sounds as a group

Embrace the chaos!

Let's go!

Stillness: Hold your phone as steady as possible so we can start from silence

As Loud as Possible: Shake your phone and move it around vigorously.

Up/Down: Follow the screen brightness. Bright = up, dark = down

Randomize: Move your phone to a random new position when the screen color changes; otherwise keep it still

The Wave: Only move your phone when the line is on your side of the room. Otherwise keep it still

Figure 8: Try repeating a figure 8 at a different speed than the person next to you

Figure 8: Change up your speed! Slow down or speed up

Fade out

Make a choice and point your phone:

- straight up, OR

- straight down, OR

- flat

Keep your phone still and wait until it stops making sound.

Stop audio by locking your phone or turning volume all the way down

We did it!

Reflections

Hybrid Audio Synthesis Paradigms

Text-based systems with user interfaces beyond text

Flow-based systems with codeboxes everywhere (Max 9.0)

Flow-based programming: Direct control of DSP in real time, but less flexible: big changes often require rewiring connections

Live coding: Higher abstraction of control into text, more flexible

Culture as Dynamic Documents

Miller Puckette: "Culture is increasingly transmitted in functionality and not in passive documents"

—"A Case Study in Software for Artists: Max/MSP and Pd," Art++, Editions Hyx, pp. 1–9, 2009.

Web-based art systems can be downloaded, modified, shared, and reconstructed in a matter of seconds

Shorter feedback loop between culture creation and distribution

Areas for improvement

"Undo" stack: HTML input elements have their own built-in undo behavior, and getting it to play nicely with Wax devices has been tricky

Abstractions: the base assumption during development was a 1:1 correspondence between devices and the underlying audio graph, so Wax is better suited to sketching

Both issues trace back to initial design assumptions

Accessibility

- Digital Divide: Tools often end up accessible only to those already tech-savvy

- Tower of Babel: Custom systems gain complexity over time and become hard to document and explain

- Free and open source doesn't guarantee accessibility: design, documentation, examples, tutorials, and feedback are crucial

The work is never over

Iteration is the secret!

Huge accumulations of subtle decisions

Without feedback from many other people, Wax would not have been nearly as successful

"Instant music, subtlety later"

—Cook, P.R. (2001). Principles for Designing Computer Music Controllers

Evergreen lessons from building systems

You will forget and neglect things

Because you must go deep AND wide in order to build it

Get comfortable with a form of incompleteness

Playful approaches to technology

How will children remember today's technology?

Modern phones are insanely powerful. Want to transmit 8-channel audio to a modular synthesizer from an iPhone? NO SWEAT

Most of the time, however, phones don't feel fun

It is important to imagine other futures

Thank you!

nnirror.xyz